And yet Binary Lambda Calculus is hard, in many ways. Working with it as my current phase crush, I encounter some quirks inherent to it. And things that were even stopping me from picking it up at some point. Like the burden of implementation:

BLC is Hard to Implement: Closures

It’s called Lambda Calculus for a reason: BLC is just a representation of lambda terms. BLC semantics are retained from its big sis. Which means: implementing BLC is implementing closures and call stacks. Maybe even add laziness as a built-in? Most Lambda Calculus “programs” use the stack-destroying Y combinator. Or even Omega😰 So one has to re-implement them with Z/Z2 etc. or support lazy/call-by-need semantics.

I always thought that using BLC means implementing one’s own interpreter/reduction engine for it. Why? just a persistent thought. At some point though, I’ve seen that John Tromp, creator of BLC… Uses Haskell to run his programs and compiles this Haskell to BLC for code golfing. So I decided to ease my implementation burden and just use Lisp lambdas as runtime.

BLC is Hard to Write: Optimization and Compilation

Now, you don’t expect me to write all these ones and zeros by hand? Not in the binary form either—my Emacs hex editor setup is not yet there. Given that I already use Lisp runtime for my lambdas, I’m compiling all my bits from a Lisp-like language too. (Though it’s more like Scheme, incidentally.)

Opportunities for hand-crafted optimization are inevitably lost in translation. I need to face that. I have to

- re-shuffle the order of my Lisp functions,

- inline some of the utils

- use

cons destructuring instead of cons accessors ( car ,cdr )- put locally useful functions closer to their use site

Every layer of lambdas adds a bit to every reference to the outside world.

So one has to do this mathematical optimization problem:

put functions where references to them are smallest.

I’m bad at math and optimization problems, but I have a good sense for where

corners can be cut.

So I do cut them.

BLC is Hard to Run: Runtime Implications

But okay, writing BLC programs is easier when you compile them from Lisp. And it removes a whole layer of problems when you have ready-made closures implemented in the language. So it’s all rainbow pony stairway to heaven now? No. Writing functions is not enough, they also have to run in some environment. At the very least, by taking some input and returning output.

That’s where another “standard” choice lurks awaiting unsuspecting programmers. BLC I/O, as defined by John Tromp, is a list of bits. As Church booleans. Or, in the byte mode, I/O is list of lists of booleans, one list per input byte.

So my beautiful functions sorting Church numerals… won’t work with that. Whatever function I write, I have to be ready for the runtime to throw raw bits at it. And I have to make my implementation commit this treachery too!

I did add BLC I/O to my tools. But I’d better write more ivory tower style programs, not bothering about I/O quirks. I sort lists of numbers, the rest doesn’t matter.

BLC is Hard to Scope: Leaky Abstractions

Now, lists of numbers are not exactly the simplest structure to work with. Numbers are repeated compositions. And lists are cons trees awaiting continuations. Not really the type of thing C programmer would use.

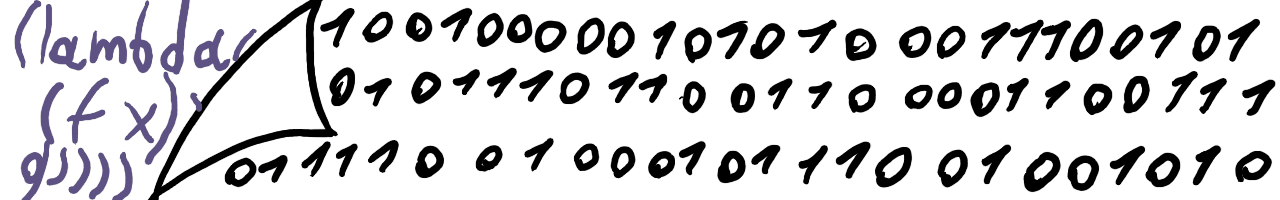

Binary Lambda Calculus is hard to scope and measure. Yes, your sorting algo takes 300 bits. But it bears with it the idea of input. And the input is Church list of Church lists of booleans. Every boolean takes 6/7 bits. Every list adds 6 bits per 6/7 bit element. So a mere "abc" string takes 200 bits:

01010101010101010100001000001100000110000010000010000010000010000011001010101010101000010000011000001100000100000100000100000110000010010101010101010000100000110000011000001000001000001000001100000110

200 bits for simple and barely practical input is too much to ignore. We have to write that in as a cost (paid by a surprised programmer!) BLC is no longer just an optimal complexity measure. It’s also the weight of structures and types.

(And I don’t even mention BLC I/O encoding input model, that’d take twice as much space.)

But then, every program implies a certain runtime convention.

Be it

So yeah, Binary Lambda Calculus has its weird parts.

But it’s also fun!

Optimizing higher-order functions for bit count.

Deranged stuff, smart talk.

So go read on BLC!

And maybe use cl-blc CLI to write and run some BLC too!

BLC is Fun!